How to Protect Your Application from AI Bots


Bots have traditionally been something we try to prevent from entering our applications. There were, of course, always good (or at least useful) bots as well, such as search engines and test automation scripts, but those were always less common compared to malicious ones.

In the past year, since LLMs and GPT have become a major part of our lives, we’ve found ourselves with a whole new set of bots that don’t really fit the categories previously known to us.

These machine identities are welcomed into our applications by users who want to get some help from GenAI, but that doesn’t change the fact that they should be a concern for us as developers who are trying to protect our data and users.

These new entities can be harmful from many security and privacy perspectives, and we need to know how to handle them.

In this blog, we will evaluate the presence of GenAI identities in our applications and show how to create a smart layer of fine-grained authorization that allows us to integrate bots securely into our applications. To do that, we’ll use two tools: Permit.io and Arcjet, to model and enforce these security rules in our applications.

We’ve always had a very clear distinction between human and machine identities. The methods used to authenticate, authorize, audit, secure, and manage those identities have been very different. In security, for example, we tried to harden human identities against human error (e.g., phishing and password theft), while with machine identities, we mostly dealt with misconfiguration problems.

The rise of GenAI usage has brought new types of hybrid identities that can potentially suffer from the problems of both worlds. These new hybrid identities consist of machine identities that adopt human attributes and human identities that adopt machine capabilities.

From the machine side, take Grammarly (or any other grammar AI application) as an example. Grammarly is a piece of software that is supposed to be identified as a machine identity. The problem? It’s installed by the application user without notifying the developers of a new type of machine identity in the system. It gets access to tons of private data and (potentially) can perform actions without letting the developers deal with the usual weaknesses of machine identities.

On the other hand, from the human identity side, GenAI tools give human identities the possibility of adopting machine power without having the “skills” to use it. Even a conventional subreddit like r/prompthacking provides people with prompts that can help them use data in ways no one expects a human identity to be capable of. It's common practice to apply less validation and sanitization to API inputs than to UI inputs, but if users can reach those APIs through bots, they can abuse the trust we place in API callers.

These new hybrid identities force us to rethink the way we are handling identity security.

One of the questions we need to re-ask when securing our applications for such hybrid identities is ‘Who is the user?’. Traditionally, that was an authentication-only question. The general authorization question of ‘What can this user do?’ fully trusts the answer of the authentication phase. With the new hybrid identities that can easily use a non-native method to authenticate, trusting this binary answer from the authentication service can lead to permission flaws. This means we must find a new way to ask the “Who” question on the authorization side.

In order to help the authorization service better answer this detailed "Who" question, we need to find a proper method to rank identities by multiple factors. This ranking system will help us make better decisions instead of using a traditional good/malicious user ranking.

When building such a ranking system, the first building block is a method for placing an identity somewhere between machine and human. In this article, we will use Arcjet, an open-source startup that builds application security tools for developers, and more specifically its bot ranking system. The following ranks span the range from malicious to good bots and help us understand the first aspect of the new “Who” question.

- NOT_ANALYZED - Request analysis failed; might lack sufficient data. Score: 0.
- AUTOMATED - Confirmed bot-originated request. Score: 1.
- LIKELY_AUTOMATED - Possible bot activity with varying certainty. Score: 2-29.
- LIKELY_NOT_A_BOT - Likely human-originated request. Score: 30-99.
- VERIFIED_BOT - Confirmed beneficial bot. Score: 100.

As you can see, each rank level also has a detailed score, so we can set finer-grained thresholds within the levels we want to configure.
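The score bands above can be read as a simple lookup. The following is a minimal sketch rather than Arcjet's API: `rankFromScore` is a hypothetical helper name, the thresholds are copied from the list above, and the input validation is an assumption made for illustration.

```javascript
// Hypothetical helper: maps a numeric bot score (0-100) to the rank names
// listed above. The bands come from the list; the function name and the
// error handling are assumptions made for this sketch.
function rankFromScore(score) {
  if (!Number.isInteger(score) || score < 0 || score > 100) {
    throw new RangeError(`score must be an integer in 0..100, got ${score}`);
  }
  if (score === 0) return "NOT_ANALYZED";
  if (score === 1) return "AUTOMATED";
  if (score <= 29) return "LIKELY_AUTOMATED";
  if (score <= 99) return "LIKELY_NOT_A_BOT";
  return "VERIFIED_BOT";
}
```

Keeping the bands in one function like this makes it easy to tighten a threshold later, for example by treating low LIKELY_NOT_A_BOT scores more cautiously.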

Assuming we can rank our user activity in real-time, we will have the first aspect required to answer the question of “Who is the user?” when we make the decision of “What can they do?” Once we have this ranking, we can also add more dimensions to this decision, making it even more sophisticated.

While the first aspect considers only the user and its behavior, the others will help us understand the sensitivity of the particular operation that a user is trying to perform on a resource. Let’s examine three methods that can help us build a comprehensive policy system for ranking hybrid identity operations.

Thinking of our new ranking system as multidimensional ranking can even help us mix and match our ranking systems for a fine-grained answer to the “What” question that takes the “Who” question into account.
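To make the idea of mixing dimensions concrete, here is a small sketch that combines an identity rank (the “Who”) with an item's access level (the “What”). Everything in it is an assumption for illustration: the `READABLE_LEVELS` table, the `canRead` helper, and the specific allowances are hypothetical and are not the policy configured later in this article.

```javascript
// Illustrative policy matrix: identity rank x item access level.
// The allowances below are assumptions for this sketch only.
const READABLE_LEVELS = new Map([
  ["LIKELY_NOT_A_BOT", ["public", "shared", "private"]],
  ["VERIFIED_BOT", ["public", "shared"]],
  ["AUTOMATED", ["public", "shared"]],
  ["LIKELY_AUTOMATED", ["public"]],
  ["NOT_ANALYZED", []],
]);

// Returns true when the given rank may read an item of the given level.
// Unknown ranks and unlisted levels (such as "blocked") are denied.
function canRead(rank, level) {
  const allowed = READABLE_LEVELS.get(rank) ?? [];
  return allowed.includes(level);
}
```

The point of the table form is that the decision depends on both inputs at once: the same identity gets different answers for different items, and the same item gets different answers for different identities.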

Let’s model such a simple ranking system in the Permit.io fine-grained authorization policy editor.

While Permit.io allows you to build a comprehensive multi-dimensional system with all the dimensions we mentioned, for the sake of simplicity in this tutorial, we will build a system based only on conditions and ownership.

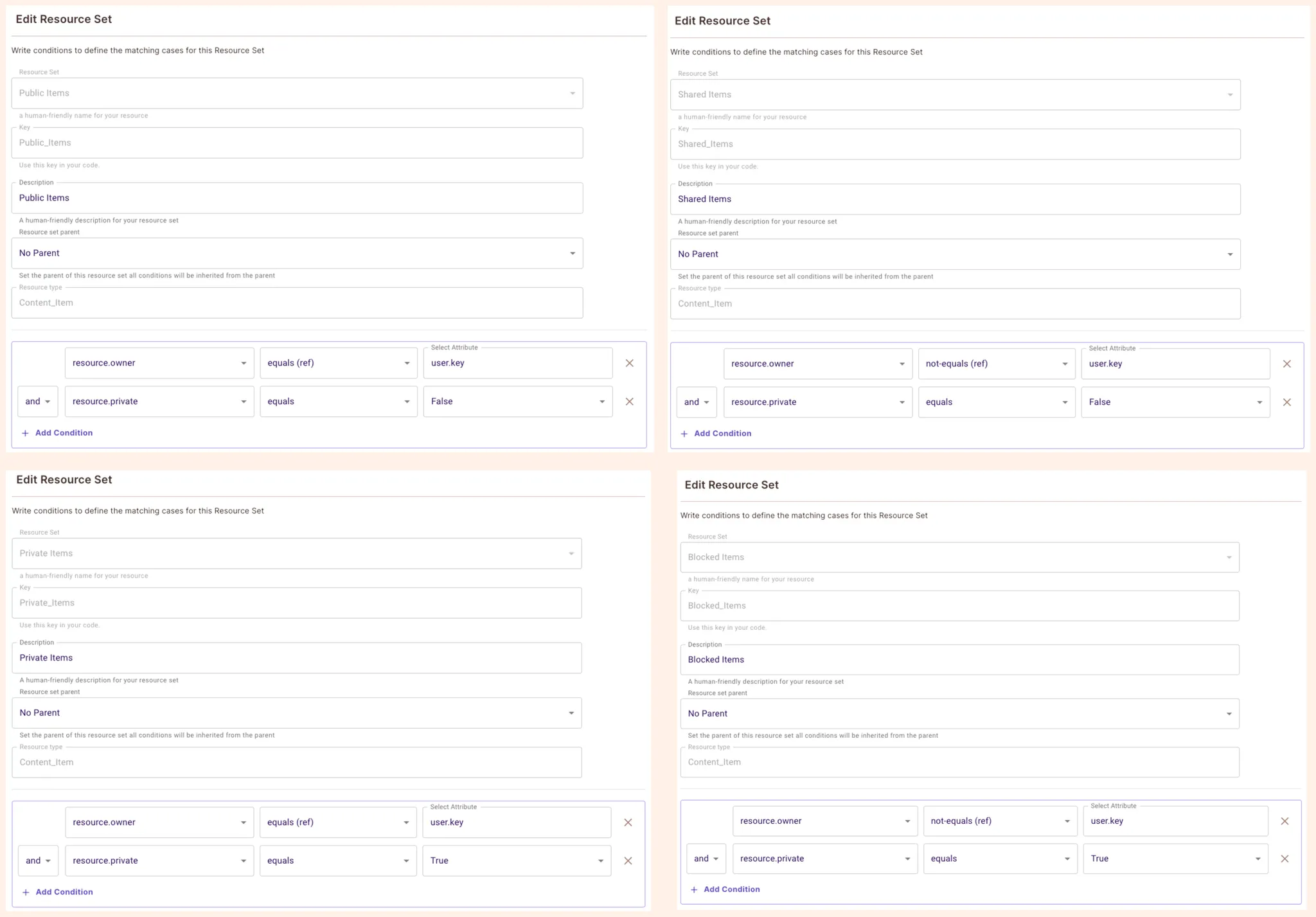

For the model, we will use an easily understandable data model of a CMS system, where we have content items with four levels of built-in access: Public, Shared, Private, and Blocked.

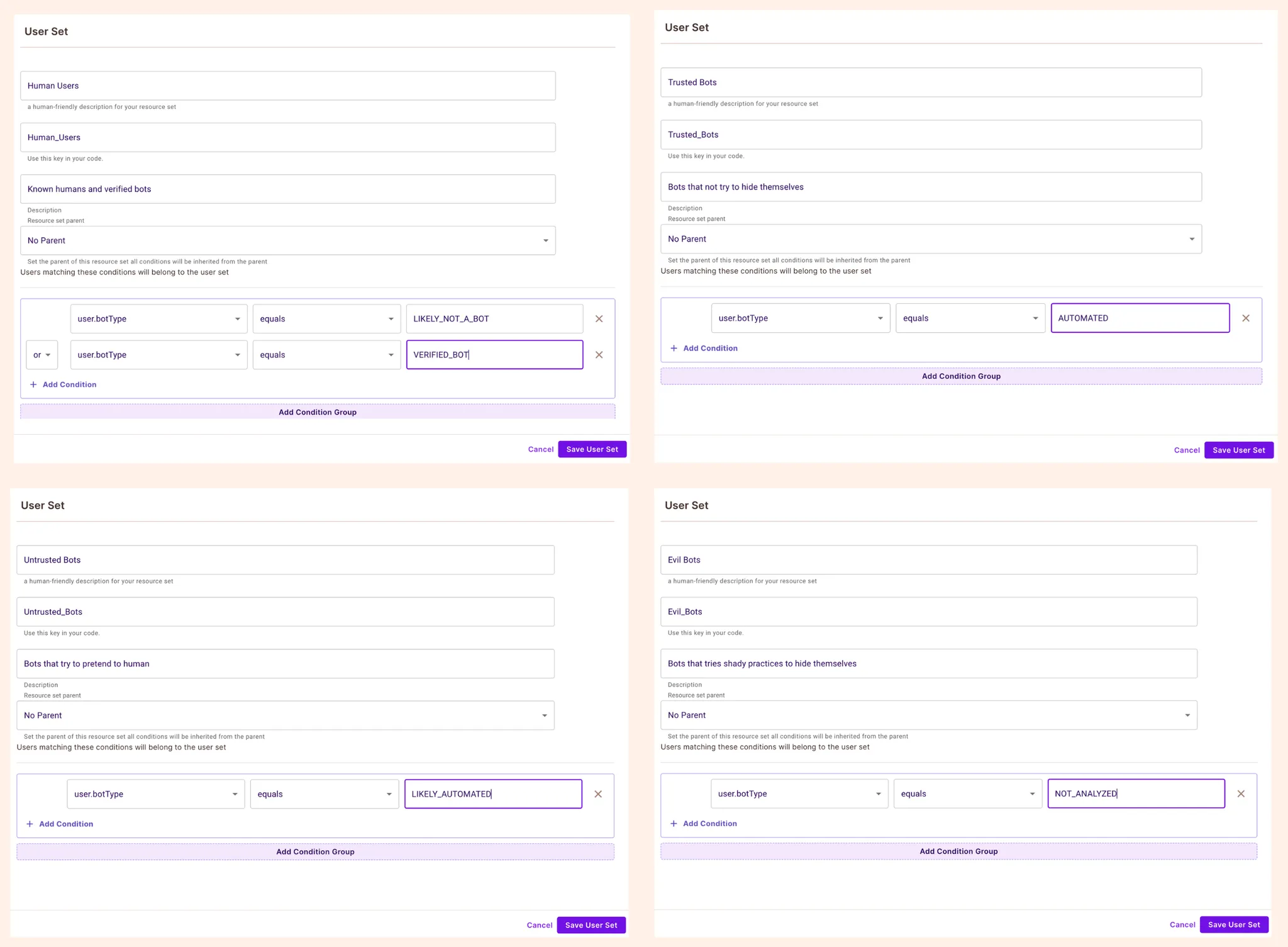
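One way to read the four levels, inferred from the sample items used in the demo code later in this article, is as a combination of two facts about an item: whether the current user owns it and whether it is marked private. The sketch below encodes that reading. The `accessLevel` function and its `userKey` parameter are hypothetical names, and the mapping is an interpretation of the sample data rather than a rule stated by Permit.io.

```javascript
// Sketch: derive the four access levels from ownership and a private flag.
// The mapping is inferred from the demo's sample items; the function name
// and the `userKey` parameter are hypothetical.
function accessLevel(item, userKey) {
  const owned = item.owner === userKey;
  if (owned) return item.private ? "Private" : "Public";
  return item.private ? "Blocked" : "Shared";
}
```

For example, `accessLevel({ owner: "alice", private: true }, "bob")` classifies the item as Blocked, because Bob does not own it and it is private.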

First, we would like to create segments for potential hybrid identities in our application. We will inherit Arcjet's direct model and configure five user sets that correlate to these five types of bots.

Log in to Permit at app.permit.io (and create a workspace if you haven’t already)

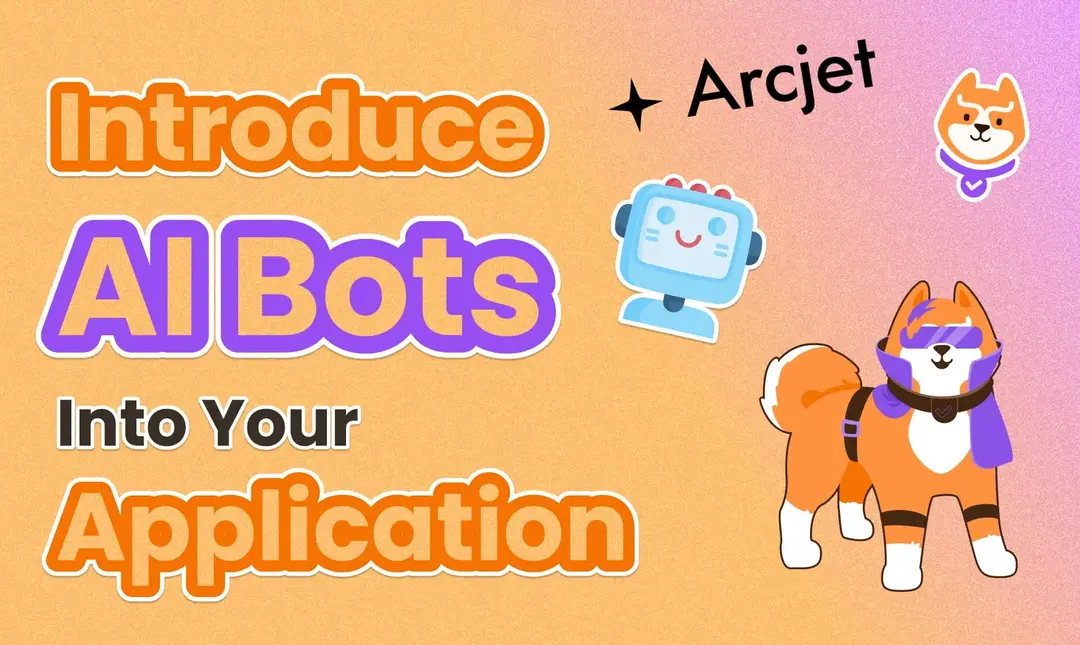

Go to Policy → Resources and create an Item resource with the following configuration

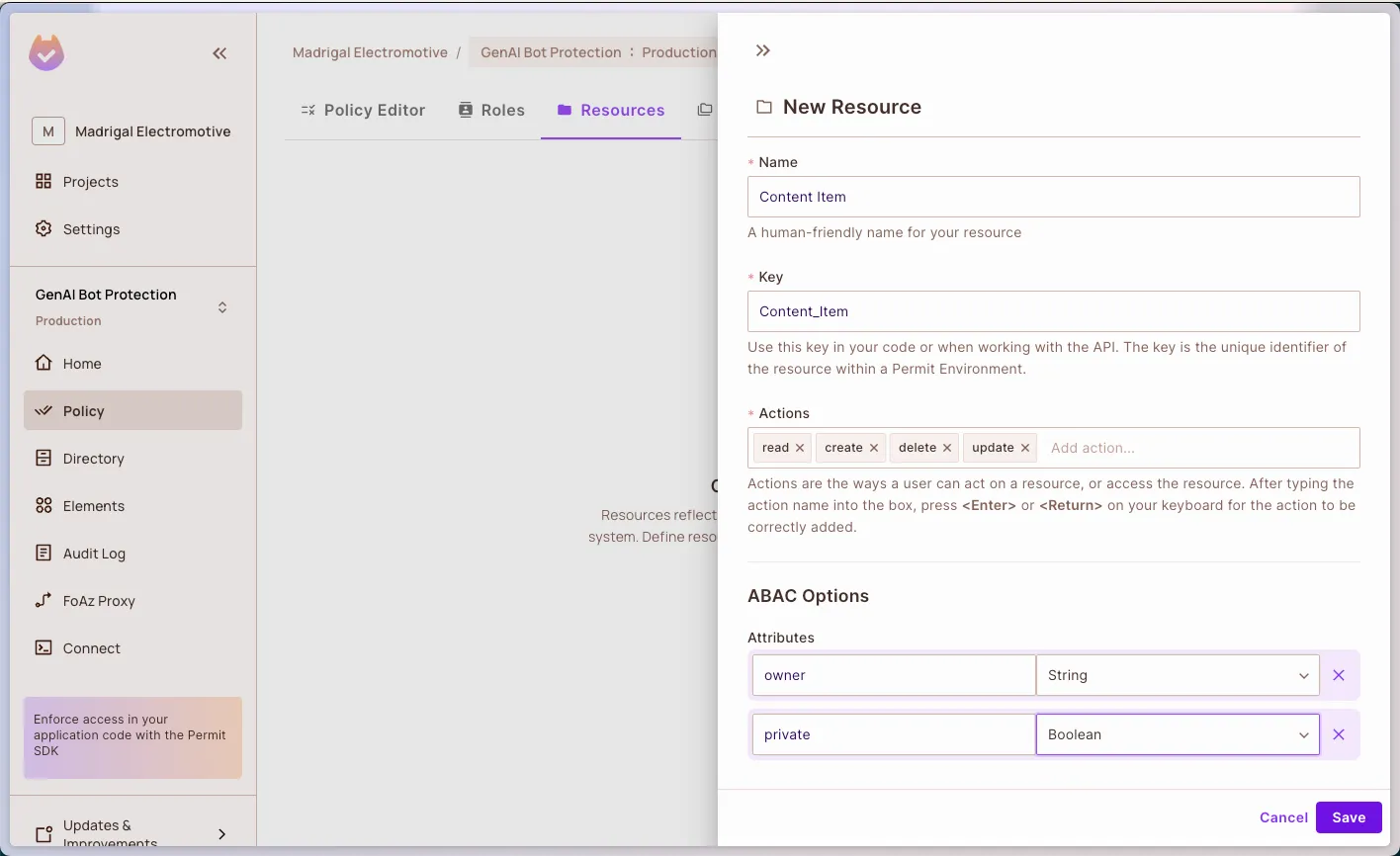

Now, we want to add a user attribute that allows for smart bot detection. Go to the Directory page, click the Settings button in the top-right corner of the page, and choose User Attributes in the sidebar. Add the following attributes

Go back to the Policy screen, and navigate to ABAC Rules. Enable the ABAC options toggle, and click Create New on the User Set table. Create the following four user sets.

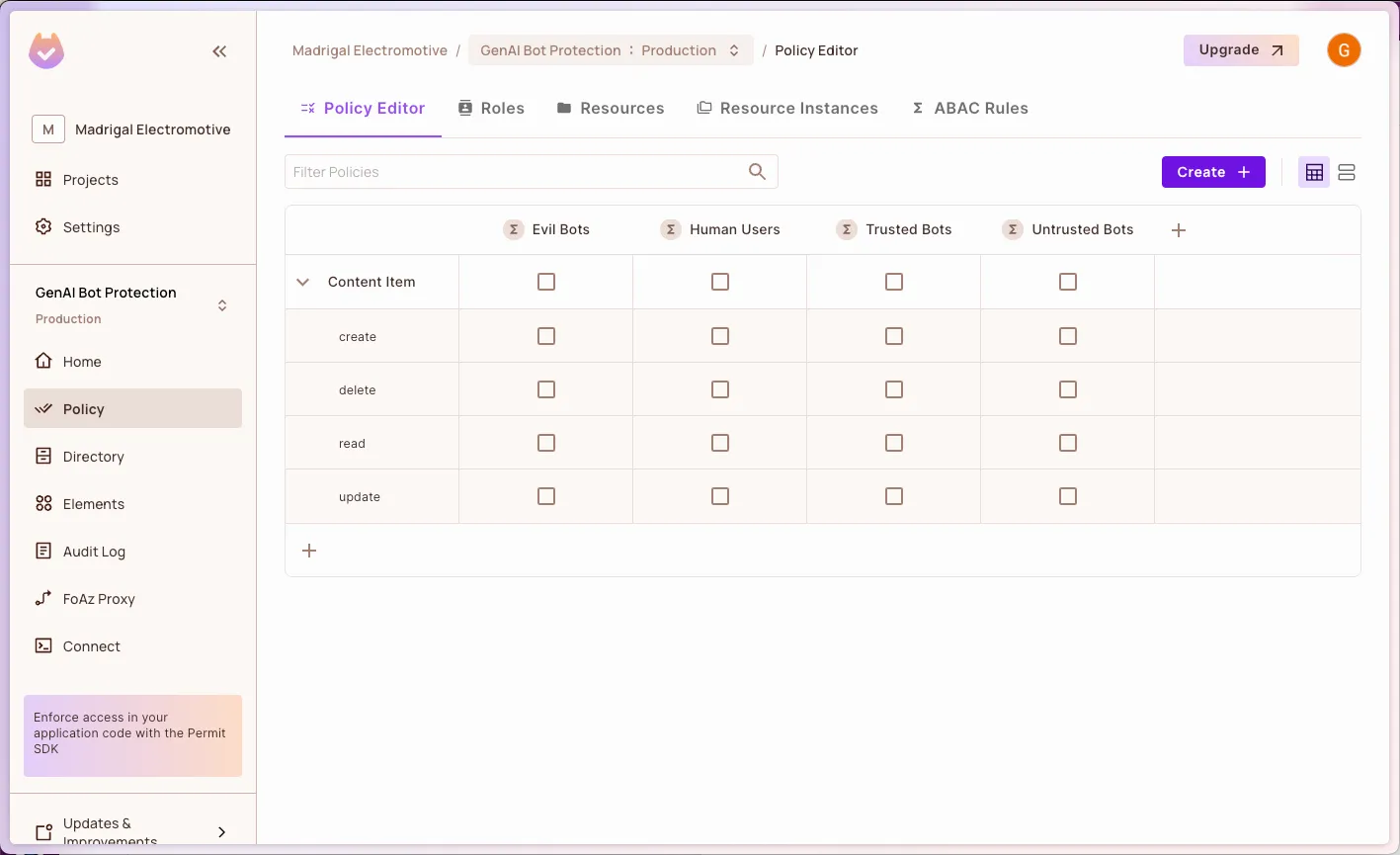

If we go back to the Policy screen now, we can see that our different user sets allow us to use the first dimensions of protection on our application.

Let’s now create the ranking on the other dimension with conditions.

Go to ABAC Rules → Create Resource Set and create four resource sets, one per item type

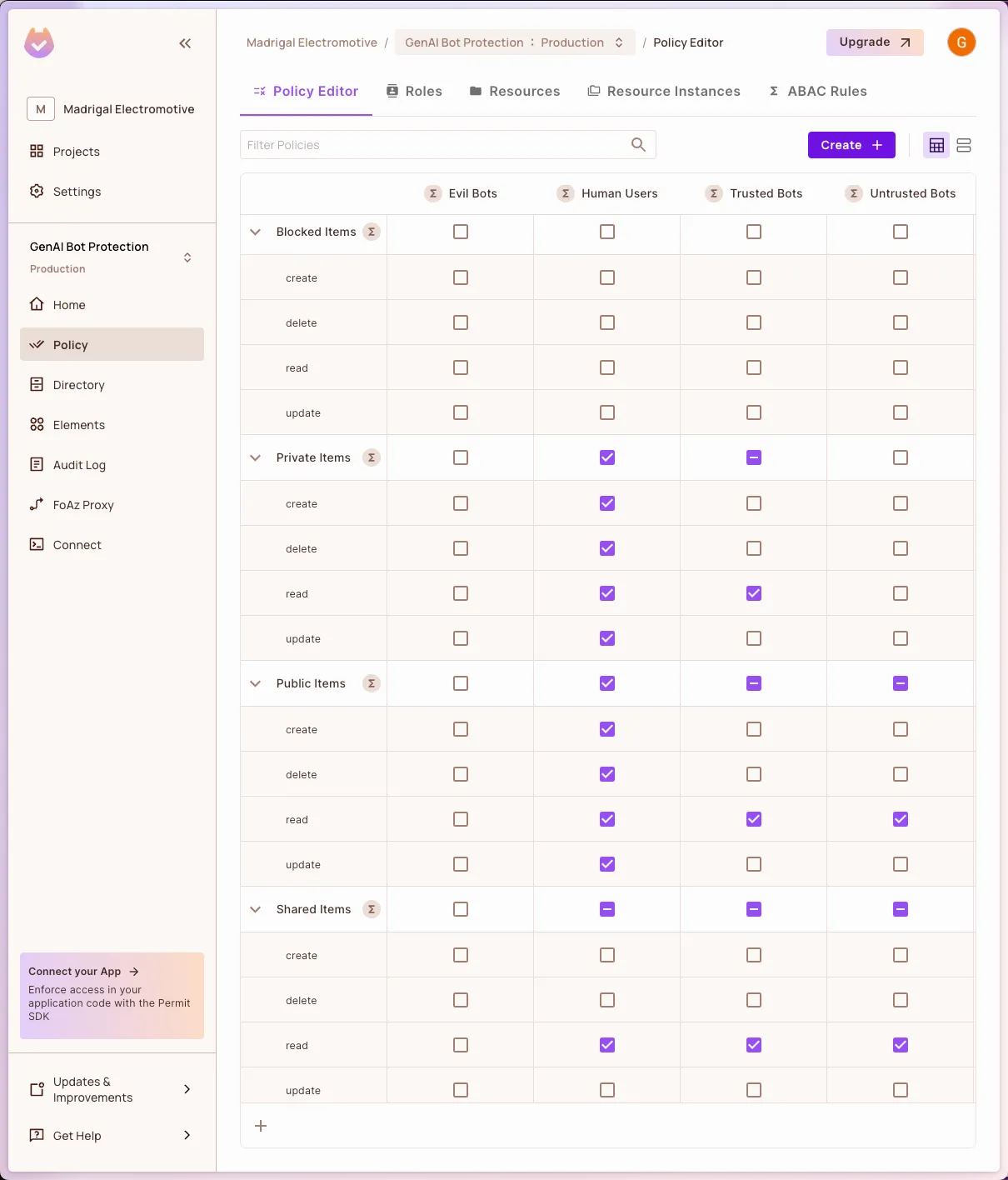

Go back to the Policy screen and ensure the following configuration

As you can easily see, we configured the following rules in the table:

This configuration stretches the “static” question of who the user is into a dynamic, multi-dimensional decision. With the policy model in mind, let’s run the project and see the dynamic enforcement in action.

In the following Node.js project, we built a very simple application that stores four content items, one for each category we defined. On the user side, we haven’t implemented authentication; instead, we use a hardcoded authentication token that carries the user ID.

All the code is available here - https://github.com/permitio/fine-grained-bot-protection

To run the project locally in your environment, please follow the steps described in the project’s Readme.md file

Let’s take a short look at the code to understand the basics of our authorization enforcement.

First, we have our endpoint to read documents from our “in-memory” database. This endpoint is fairly simple: It takes a hardcoded list of items, filters it by authorization level, and returns them to the user as plain text.

    // One sample item per access level
    const ITEMS = [
      { id: 1, name: "Public Item", owner: USER, private: false },
      { id: 2, name: "Shared Item", owner: OTHER_USER, private: false },
      { id: 3, name: "Private Item", owner: USER, private: true },
      { id: 4, name: "Blocked Item", owner: OTHER_USER, private: true },
    ];

    // Return only the items the caller is authorized to read, as plain text
    app.get("/", async (req, res, next) => {
      const items = await authorizeList(req, ITEMS);
      res
        .type("text/plain")
        .send(items.map(({ id, name }) => `${id}: ${name}`).join("\r\n"));
    });

The authorizeList function that we are using here enforces permissions in two steps. First, it gets the bot rank of the particular request from Arcjet. Then, it calls the bulkCheck function on Permit with the real-time Arcjet bot rank and the attributes of each content item. With this context, Permit’s PDP returns the relevant decision for each item.

    const authorizeList = async (req, list) => {
      // Get the bot detection decision from Arcjet
      const decision = await aj.protect(req);
      // Find the bot-detection result; read botType defensively in case it is absent
      const botResult = decision.results.find((r) => r.reason.isBot());
      const botType = botResult?.reason.botType ?? false;

      // Check authorization for each item in the list.
      // For each user, we add the botType attribute.
      // For each resource, we add the item attributes.
      const authorizationFilter = await permit.bulkCheck(
        list.map((item) => ({
          user: {
            key: USER,
            attributes: { botType },
          },
          action: "read",
          resource: {
            type: "Content_Item",
            attributes: { ...item },
          },
        }))
      );

      // Keep only the items whose check (at the same index) was allowed
      return list.filter((item, index) => authorizationFilter[index]);
    };
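The final filter step relies on the bulk check returning one boolean per input, in the same order as the input list. A defensive variant, sketched below under that assumption, fails closed if the two lists ever disagree in length. `filterByDecisions` is a hypothetical helper and is not part of the Permit SDK.

```javascript
// Hypothetical helper: keep only the items whose decision is exactly `true`.
// Assumes `decisions[i]` is the authorization result for `list[i]`.
function filterByDecisions(list, decisions) {
  // Fail closed: if the results do not line up with the inputs, return nothing.
  if (!Array.isArray(decisions) || decisions.length !== list.length) {
    return [];
  }
  return list.filter((_, index) => decisions[index] === true);
}
```

With a helper like this, the last line of the authorization function could become `return filterByDecisions(list, authorizationFilter);`, so a malformed response results in an empty list instead of accidentally exposing items.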

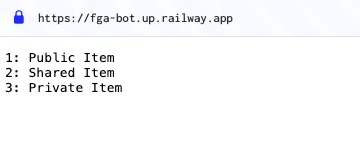

To test the application, you can use your local endpoint or use our deployed version at: https://fga-bot.up.railway.app/

When you visit the application from a browser, you can read the Public, Private, and Shared items.

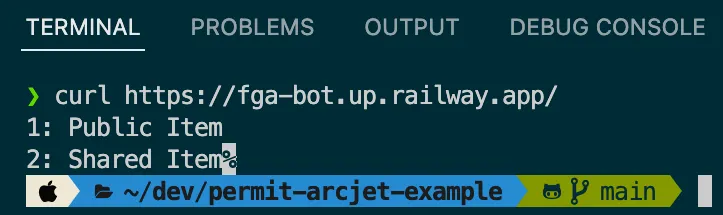

Try to run the following curl call from your terminal. Since this is a bot that does not try to pretend it isn't a bot, it will get the result as a trusted bot.

    curl https://fga-bot.up.railway.app/

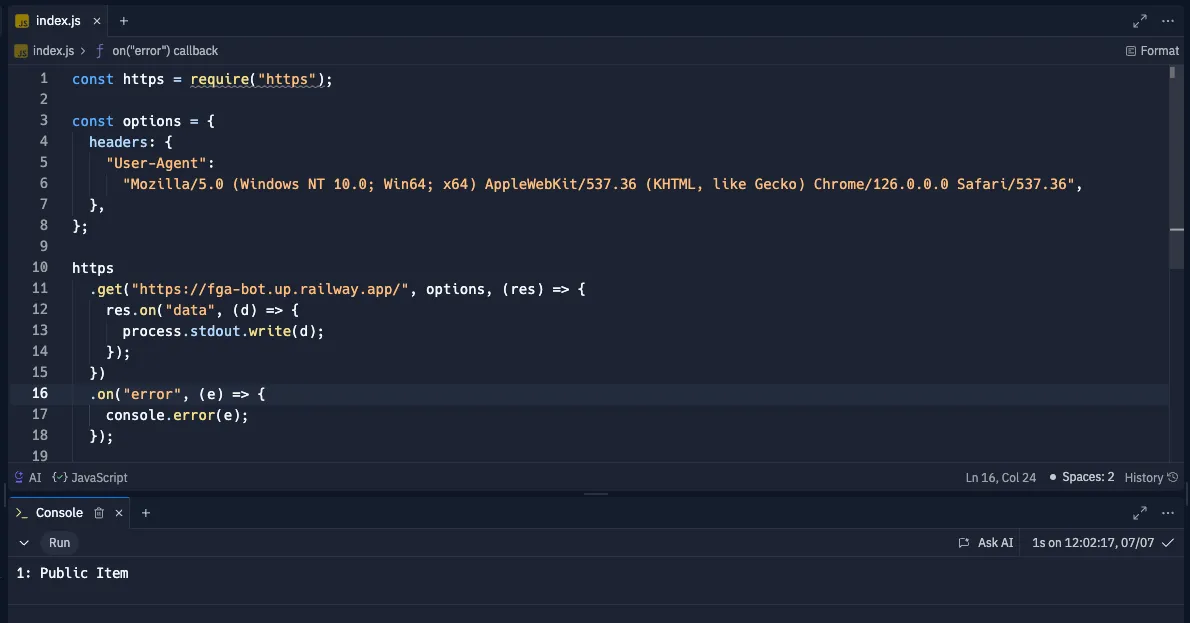

Now, let's try to run a bot from a cloud-hosted machine that pretends to be human by its user agent. To run the bot, access the following environment and run the code there.

https://replit.com/@gabriel271/Nodejs#index.js

As you can see, this bot gets only the public items.
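For reference, a bot of this kind can be very small. The sketch below is not the code from the Replit project; it is an assumed equivalent that sends a browser-like User-Agent header using the `fetch` function built into Node.js 18 and later. The helper names and the header values are illustrative.

```javascript
// Hypothetical helper: builds fetch options that imitate a desktop browser.
// The User-Agent string is an example value, not one taken from the demo.
function browserLikeOptions() {
  return {
    method: "GET",
    headers: {
      "User-Agent":
        "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 " +
        "(KHTML, like Gecko) Chrome/124.0.0.0 Safari/537.36",
      Accept: "text/html,application/xhtml+xml",
    },
  };
}

// Fetches the given URL with the browser-like headers and resolves to the
// response body as text. `fetchImpl` is injectable so the function can be
// exercised without network access.
async function readItems(url, fetchImpl = fetch) {
  const response = await fetchImpl(url, browserLikeOptions());
  return response.text();
}
```

Calling `readItems("https://fga-bot.up.railway.app/").then(console.log)` prints whatever item list the server decides this client may read. The takeaway from the demo is that changing the User-Agent header alone was not enough to be treated as a human.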

As this demo shows, we have a dynamic, context-aware system that ranks our hybrid identities in real time and returns the relevant permissions according to their activity.

Security challenges associated with non-human identities are threats that developers need to handle directly. Traditionally, security teams have been responsible for these concerns, but the complexity and nuances of hybrid identities require a developer-centric approach. Developers who understand the intricacies of their applications are better positioned to implement and manage these security measures effectively.

The only way to ensure our applications’ resilience is to use tools that give developers an experience that integrates seamlessly into the software development lifecycle. The tools we used in this article are great examples of how to deliver the highest standards of application security while speaking the language of developers.

With Permit.io, developers (and other stakeholders) can easily modify authorization policies to respond to emerging threats or changing requirements. For instance, we can adjust our rules to prohibit bots from reading shared data. This change can be made seamlessly within the Permit.io dashboard, instantly affecting the authorization logic without altering the application's codebase. All developers have to do is keep a very low footprint of enforcement functions in their applications.

With Arcjet, we are taking the usual work of bot protection, which is traditionally part of the environment setup and external to the code itself, into the application code. Using the Arcjet product-oriented APIs, developers can create a dynamic protection layer without the hassle of maintaining another cycle in the software development lifecycle.

The demo shows how these tools can work together without any setup outside the core of product development.

In this article, we explored the evolving landscape of machine identities and the security challenges they present. We discussed the importance of decoupling application decision logic from code to maintain flexibility and adaptability. By leveraging dynamic authorization tools like Permit.io, we can enhance our application's security without compromising on developer experience.

To learn more about your possibilities with Permit’s fine-grained authorization, we invite you to take a deeper tour of the Permit UI and documentation and learn how to achieve fine-grained authorization in your application. We also invite you to visit the Arcjet live demos to experience the other areas where they can provide great application protection with few lines of code.
