Least Privilege in AI Agents and Agentic Identity

The shared ai-agent-prod service account is where the audit starts getting weird. It can read customer records, open support tickets, query the warehouse, update CRM notes, and call three internal tools whose owners left last year. Nobody remembers why it has all of those grants. The answer is usually some version of "the agent needed to complete the workflow," which is not an access model. It is a confession with YAML around it.

That pattern worked badly enough for service accounts. It gets much worse with agents because an agent is not just a process calling an API. It is a runtime making choices, composing tools, interpreting user intent, and sometimes delegating work to other agents. Treating that runtime like a slightly chatty backend service is the first mistake. The second mistake is thinking the fix is merely to make the credential expire faster. A five-minute credential in a compromised agent runtime is still a live credential. It just comes with a countdown timer.

Least privilege for agents needs a different unit of control. The security boundary cannot be "this workload owns this token." It has to be "this delegated identity may attempt this action, for this purpose, in this workflow, under these constraints, and only through an enforcement point that can say no."

Human access control has a relatively stable shape. A person has a role, belongs to groups, sits in an organization, and requests access to resources. Service account access control is less pleasant, but still legible: one workload calls another for a defined purpose. The permission may be too broad, but the actor is at least boring. Boring is underrated in security.

Agents are not boring.

An AI agent can begin with a narrow instruction — summarize this contract, create a renewal task, find risky clauses — and then discover that it needs document storage, CRM context, Slack history, a ticketing system, and maybe a database query. Each individual call can look defensible. The chain is where the risk hides. If the agent has broad credentials, the model's reasoning path becomes the real authorization layer, which is exactly as alarming as it sounds.

The old least-privilege trick was to predefine the smallest set of permissions a process needed. That assumes you know the process. With agents, the process may be assembled at runtime from model output, tool descriptions, user prompts, retrieved context, and intermediate results. The access decision cannot be made once at startup and then forgotten. It has to be evaluated at the moment of action.

Most teams notice this problem only when they try to audit the system. The logs show that ai-agent-prod exported a file, updated a record, and called a billing API. Technically true. Operationally useless. The actor was not merely the service account. The actor was a human delegation, a workflow, a purpose, a model decision, and a tool call. If the access system cannot see those dimensions, it cannot enforce least privilege. It can only decorate over-permission with better logging.
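To make the gap concrete, here is a minimal sketch of an audit event that records those dimensions alongside the service account name. The `AuditEvent` type and all of its field names are hypothetical, chosen only for illustration; they are not taken from any specific logging product.

```python
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class AuditEvent:
    """One agent action, described by more than the service account name."""
    service_account: str  # the technical identity, e.g. "ai-agent-prod"
    delegator_id: str     # the human the agent was acting for
    task_id: str          # the workflow or task the action belongs to
    purpose: str          # the declared intent of that task
    tool: str             # the tool call that was attempted
    decision: str         # "allow" or "deny", as decided by policy


def to_log_line(event: AuditEvent) -> str:
    # Sorted keys keep log lines stable and easy to diff.
    return json.dumps(asdict(event), sort_keys=True)


event = AuditEvent(
    service_account="ai-agent-prod",
    delegator_id="user_7f3a91",
    task_id="task_contract_renewal_review_8842",
    purpose="review_customer_contract_for_renewal_risk",
    tool="billing.update",
    decision="deny",
)
line = to_log_line(event)
```

A record shaped like this lets an auditor ask who delegated the work and why, not only which account made the call.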

Agentic identity starts with a simple claim: the agent is not the root authority. It is acting on behalf of someone, inside a workflow, for a declared purpose. That means the identity of an agent action should carry at least three things: the delegating human, the workflow context, and the declared intent.

The delegating human matters because agents inherit authority; they do not manufacture it. If Maya in Customer Success can update renewal notes but cannot change billing terms, an agent acting for Maya should not magically become a finance administrator because the prompt got ambitious.

Workflow context matters because the same human can do different things in different situations. Reading a customer contract during a renewal review is not the same as bulk-exporting all contracts for analysis. Same user, same data class, very different risk profile. Access control that only sees the user misses the distinction that matters.

Declared intent matters because agents are probabilistic planners. They can drift. They can misunderstand. They can be manipulated. A system that captures the intended purpose of the task has something to compare behavior against. Without declared intent, "the model decided to" becomes a security primitive, which is not a primitive anyone should want in production.

A useful agentic identity envelope looks less like a bearer credential and more like a structured claim about delegated action:

    {
      "delegator_id": "user_7f3a91",
      "tenant_id": "tenant_acme",
      "session_id": "sess_2026_05_12_001",
      "task_id": "task_contract_renewal_review_8842",
      "purpose": "review_customer_contract_for_renewal_risk",
      "allowed_tools": [
        "contracts.read",
        "crm.read",
        "tickets.create"
      ],
      "resource_constraints": {
        "customer_id": "cust_acme_1042",
        "contract_ids": ["contract_7781"],
        "max_records": 25,
        "data_classes": ["contract_metadata", "renewal_terms", "support_summary"]
      },
      "expires_at": "2026-05-12T18:30:00Z"
    }

Notice what is not in the envelope: the real credential for any end service. No database password. No cloud credential. No OAuth token for the CRM. No API key hidden in a "temporary" field because someone got tired on a Friday. The envelope should identify the agentic action. It should not empower the agent to bypass the enforcement layer. Identity tells the system what the agent is allowed to ask for. It should not hand the agent raw power.
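As a rough sketch of how a receiving system might treat such an envelope, the Python below parses it, rejects any payload that carries credential-like fields, and checks tool membership and expiry. The `Envelope` class, `parse_envelope`, `may_request`, and the `FORBIDDEN_KEYS` list are assumptions made for illustration, not part of any published schema or API.

```python
from dataclasses import dataclass
from datetime import datetime, timezone

# Hypothetical key names that would signal a raw credential leaked into the envelope.
FORBIDDEN_KEYS = {"api_key", "password", "oauth_token", "access_token", "secret"}


@dataclass(frozen=True)
class Envelope:
    delegator_id: str
    tenant_id: str
    session_id: str
    task_id: str
    purpose: str
    allowed_tools: frozenset
    resource_constraints: dict
    expires_at: datetime


def parse_envelope(raw: dict) -> Envelope:
    """Build an Envelope, refusing any payload that smuggles in a credential."""
    leaked = FORBIDDEN_KEYS.intersection(raw)
    if leaked:
        raise ValueError(f"envelope must not carry credentials: {sorted(leaked)}")
    return Envelope(
        delegator_id=raw["delegator_id"],
        tenant_id=raw["tenant_id"],
        session_id=raw["session_id"],
        task_id=raw["task_id"],
        purpose=raw["purpose"],
        allowed_tools=frozenset(raw["allowed_tools"]),
        resource_constraints=raw["resource_constraints"],
        # Normalize the trailing "Z" so older Python versions can parse it.
        expires_at=datetime.fromisoformat(raw["expires_at"].replace("Z", "+00:00")),
    )


def may_request(env: Envelope, tool: str, now: datetime) -> bool:
    """True only if the envelope is unexpired and names the tool."""
    return now < env.expires_at and tool in env.allowed_tools


RAW = {
    "delegator_id": "user_7f3a91",
    "tenant_id": "tenant_acme",
    "session_id": "sess_2026_05_12_001",
    "task_id": "task_contract_renewal_review_8842",
    "purpose": "review_customer_contract_for_renewal_risk",
    "allowed_tools": ["contracts.read", "crm.read", "tickets.create"],
    "resource_constraints": {"customer_id": "cust_acme_1042", "contract_ids": ["contract_7781"]},
    "expires_at": "2026-05-12T18:30:00Z",
}
env = parse_envelope(RAW)
```

Passing this check only means the agent may ask. The decision to act still belongs to the enforcement layer.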

The first failure mode is the broad service account. It begins innocently: the agent needs five tools, so someone creates one credential that can use all five. Then the agent needs a sixth. Then another team reuses the same account because the integration already works. Six months later, the service account is a skeleton key with a friendly name.

The second failure mode is missing expiration. Long-lived permissions are already dangerous, but agents make the problem sharper because their behavior changes with prompts, tools, models, and retrieved context. A grant that made sense for yesterday's workflow may be absurd for today's. If delegation has no end, it is not delegation. It is abandonment.

The third failure mode is intent drift. An agent starts with "prepare a renewal summary" and ends up pulling unrelated support tickets, checking payment history, or drafting outbound email to the customer. Drift often looks like helpfulness until it crosses a boundary. Least privilege has to recognize not only whether a tool call is allowed, but whether it still fits the declared purpose of the task.
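One way to picture a drift check is to compare each proposed tool call against the structured task context rather than the model's own explanation. The sketch below assumes hypothetical `ToolCall` and `DriftMonitor` types and reuses constraint names from the example envelope; real drift detection would likely be richer than these four checks.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolCall:
    tool: str
    customer_id: str
    data_class: str
    record_count: int


class DriftMonitor:
    """Tracks one task and reports why a proposed call falls outside it."""

    def __init__(self, allowed_tools, constraints):
        self.allowed_tools = set(allowed_tools)
        self.constraints = constraints
        self.records_seen = 0  # running total across the whole task

    def check(self, call: ToolCall) -> list:
        reasons = []
        if call.tool not in self.allowed_tools:
            reasons.append("tool_not_in_task")
        if call.customer_id != self.constraints["customer_id"]:
            reasons.append("wrong_customer")
        if call.data_class not in self.constraints["data_classes"]:
            reasons.append("data_class_out_of_purpose")
        if self.records_seen + call.record_count > self.constraints["max_records"]:
            reasons.append("record_budget_exceeded")
        if not reasons:
            # Only in-scope calls consume the record budget.
            self.records_seen += call.record_count
        return reasons


monitor = DriftMonitor(
    allowed_tools=["contracts.read", "crm.read", "tickets.create"],
    constraints={
        "customer_id": "cust_acme_1042",
        "max_records": 25,
        "data_classes": ["contract_metadata", "renewal_terms", "support_summary"],
    },
)
```

An empty list means the call still fits the task. Any other result is a reason to block the call before it executes.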

The fourth failure mode is multi-agent chain collapse. One agent asks another to enrich data. That agent calls a third to classify risk. A fourth writes results. If every downstream agent inherits the original authority without reduction, the chain becomes a privilege amplifier. The last agent in the chain may be farthest from the user's intent but still carrying the broadest effective power.

The bad fix is to sprinkle short-lived end-service tokens everywhere and declare victory. Short-lived tokens are better than standing keys because they shorten the window of exposure. But if the agent runtime is compromised while the token is valid, the attacker still holds a live credential until it expires. The blast radius is smaller, but it still exists.

The architecture agentic systems actually need is stricter: an identity envelope for delegated intent, a policy decision point that evaluates every meaningful action, an enforcement gateway that mediates tool calls, and a credential vault that keeps real end-service credentials out of the agent runtime entirely. The agent can propose. It should not possess.

This is where Permit.io's model becomes concrete. The agent carries an identity token — the agentic identity envelope — that describes delegation, workflow context, declared intent, allowed tools, resource constraints, and expiry. That token authorizes the agent to ask the Permit MCP Gateway to perform an action. It is not a bearer credential for the end service.

The Permit MCP Gateway acts as both the enforcement point and the vault for real end-service credentials. API keys, OAuth tokens, database passwords, and cloud credentials stay vaulted at the gateway. The agent never receives them. When the agent asks to call a tool, the gateway sends the authorization question to the Permit PDP — the Policy Decision Point — which runs inside the customer's VPC. If policy allows the action, the gateway uses its vaulted credential to call the end service on the agent's behalf.

That is zero standing privileges in the Permit context. Not "the agent gets a short-lived token." Not "the agent gets a narrower credential." The agent gets no real end-service credential at all.

This matters because compromise math changes. In a temporal-token model, a compromised agent runtime exposes a live credential until it expires. In the gateway-vault model, a compromised runtime exposes only the identity envelope — useless without going through the Permit MCP Gateway, where policy is still evaluated. The attacker can ask. The gateway can still refuse. That is a much better sentence to say during an incident review.
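The gateway-vault flow can be sketched in a few lines. This is not Permit's API: the `Gateway` class, `example_pdp`, and `read_contract` are hypothetical stand-ins that only illustrate the shape of the idea, where the vault lives inside the gateway and every call passes through a policy decision first.

```python
from typing import Any, Callable, Dict

Decide = Callable[[dict, str, dict], bool]  # stand-in for a policy decision point
Tool = Callable[[str, dict], Any]           # takes (credential, args)


class Gateway:
    def __init__(self, vault: Dict[str, str], tools: Dict[str, Tool], decide: Decide):
        self._vault = vault    # real credentials; never returned to the caller
        self._tools = tools
        self._decide = decide

    def call(self, envelope: dict, tool: str, args: dict) -> dict:
        # Unknown tools and policy denials look the same to the agent.
        if tool not in self._tools or not self._decide(envelope, tool, args):
            return {"status": "denied", "tool": tool}
        result = self._tools[tool](self._vault[tool], args)
        return {"status": "ok", "tool": tool, "result": result}


def example_pdp(envelope: dict, tool: str, args: dict) -> bool:
    """Toy policy: the tool and the contract must both be named in the envelope."""
    allowed_contracts = envelope["resource_constraints"]["contract_ids"]
    return tool in envelope["allowed_tools"] and args.get("contract_id") in allowed_contracts


def read_contract(credential: str, args: dict) -> dict:
    # A real tool would authenticate to the end service with the credential here.
    return {"contract_id": args["contract_id"], "fetched": bool(credential)}


gateway = Gateway(
    vault={"contracts.read": "vaulted-secret"},
    tools={"contracts.read": read_contract},
    decide=example_pdp,
)
envelope = {
    "allowed_tools": ["contracts.read"],
    "resource_constraints": {"contract_ids": ["contract_7781"]},
}
```

The agent only ever sees the response dictionary. The credential is used inside `call` and does not appear in what comes back.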

The policy layer cannot be a toy. Agents need more than one style of authorization because agentic work crosses organizational, contextual, and relationship boundaries. RBAC handles coarse role grants. ABAC evaluates attributes such as tenant, data class, time, purpose, and risk. ReBAC handles relationship-based access: account owner, assigned CSM, case participant, document collaborator. Policy languages such as Cedar, Rego, and Polar matter because serious teams already have policy logic, and nobody wants to rewrite their security model from scratch.
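To show how the three styles combine on a single request, here is a small sketch in plain Python. It stands in for what a real deployment would express in a policy language such as Cedar, Rego, or Polar. The role names, the relationship table, and every function name are hypothetical.

```python
# Hypothetical in-memory data; a real deployment would keep this in a policy store.
ROLE_GRANTS = {"customer_success": {"contracts.read", "crm.read", "tickets.create"}}
USER_ROLES = {"user_7f3a91": {"customer_success"}}
ASSIGNED_CSM = {("user_7f3a91", "cust_acme_1042")}  # (user, customer) relationships


def rbac_allows(user: str, action: str) -> bool:
    """RBAC: does any of the user's roles grant this action?"""
    return any(action in ROLE_GRANTS.get(role, set()) for role in USER_ROLES.get(user, set()))


def abac_allows(request: dict) -> bool:
    """ABAC: do the request attributes (tenant, data class) line up?"""
    return (
        request["tenant_id"] == request["resource_tenant_id"]
        and request["data_class"] in request["allowed_data_classes"]
    )


def rebac_allows(user: str, customer_id: str) -> bool:
    """ReBAC: is the user the assigned CSM for this customer?"""
    return (user, customer_id) in ASSIGNED_CSM


def authorize(request: dict) -> bool:
    # All three checks must pass; any single failure denies the action.
    return (
        rbac_allows(request["user"], request["action"])
        and abac_allows(request)
        and rebac_allows(request["user"], request["customer_id"])
    )


request = {
    "user": "user_7f3a91",
    "action": "contracts.read",
    "customer_id": "cust_acme_1042",
    "tenant_id": "tenant_acme",
    "resource_tenant_id": "tenant_acme",
    "data_class": "renewal_terms",
    "allowed_data_classes": ["contract_metadata", "renewal_terms", "support_summary"],
}
```

Changing any one dimension, such as the action, the tenant, or the customer relationship, is enough to turn the allow into a deny.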

Permit's Guardian Agents add the observability layer: policy-constrained observers that analyze agent behavior, detect intent drift, and help humans improve policy as systems grow faster than manual review can track. The guardian itself must operate under policy constraints. Otherwise, the thing watching the blast radius becomes part of it.

The line worth keeping: the model proposes; policy decides. The model is good at planning. Policy is good at saying "not for this user, not for this tenant, not for this purpose, not with that data class, not from this workflow." Prompts are instructions. Policy is enforcement. Mixing those jobs is where security problems begin.

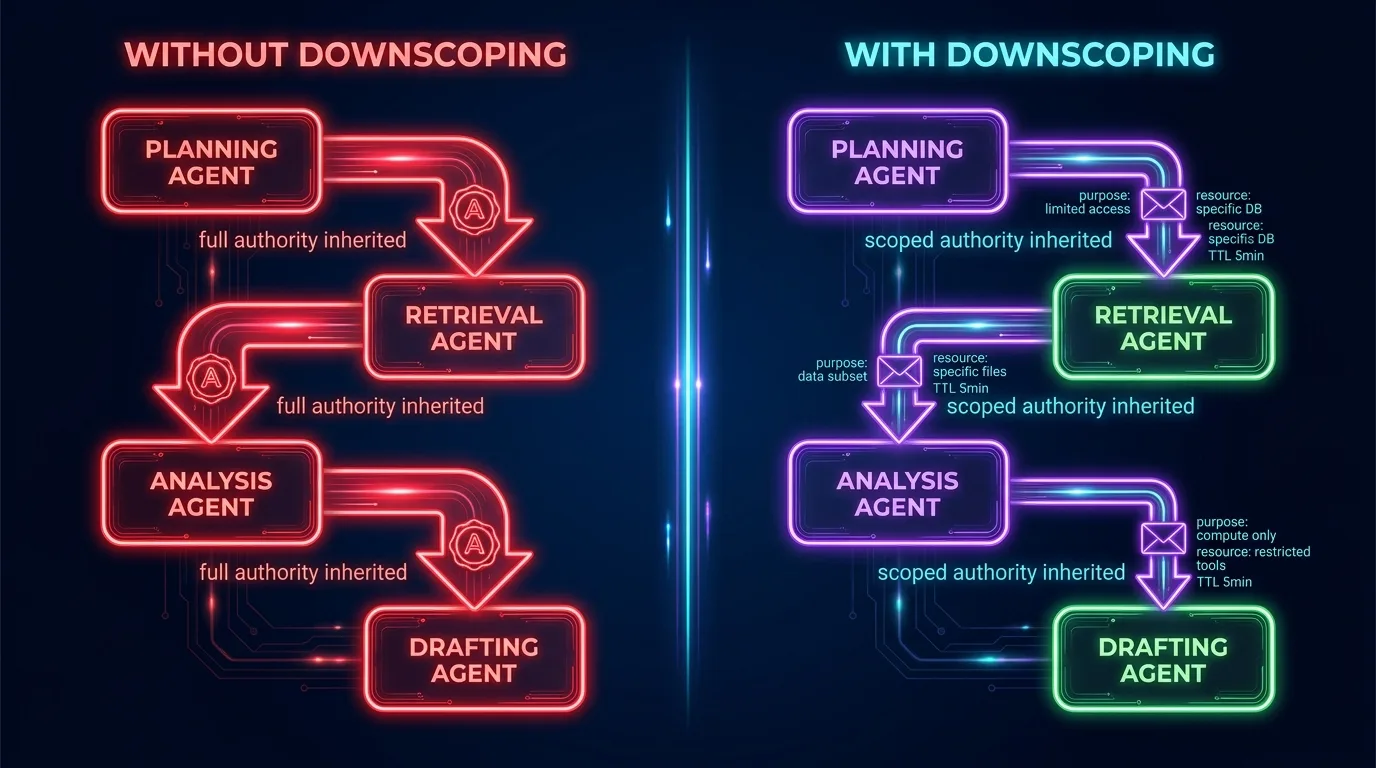

Multi-agent systems make least privilege more interesting because delegation becomes recursive. The first agent is already acting on behalf of a human. When it delegates to another agent, the downstream agent should not inherit the full original envelope. It should receive a downscoped identity: narrower tools, narrower resources, narrower purpose, and a clearer task boundary.

A renewal-review agent may be allowed to read one customer contract, inspect related CRM fields, and create an internal ticket. If it delegates to a clause-classification agent, that second agent does not need CRM access or ticket creation rights. It needs the relevant contract excerpt and permission to return a classification. This is least privilege as a relay race, not a family inheritance.

Downscoping also gives each agent a policy identity of its own. A code-execution agent, a data-retrieval agent, and an email-drafting agent should not be treated as interchangeable workers in the same runtime. Each should operate under constraints matching its tools and failure modes.
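Downscoping can be sketched as a function that only ever narrows. The `downscope` helper below is hypothetical and assumes envelopes shaped like the earlier example: the child may request a subset of the parent's tools and contracts, and it can never outlive the parent.

```python
from datetime import datetime


def _parse(ts: str) -> datetime:
    # Normalize the trailing "Z" so older Python versions can parse it.
    return datetime.fromisoformat(ts.replace("Z", "+00:00"))


def downscope(parent: dict, tools: list, contract_ids: list, purpose: str, expires_at: str) -> dict:
    """Derive a child envelope that is never broader than its parent."""
    if not set(tools) <= set(parent["allowed_tools"]):
        raise PermissionError("child requested tools the parent does not hold")
    parent_contracts = set(parent["resource_constraints"]["contract_ids"])
    if not set(contract_ids) <= parent_contracts:
        raise PermissionError("child requested resources outside the parent scope")
    # Take whichever expiry comes first, so the child cannot outlive the parent.
    child_expiry = min(expires_at, parent["expires_at"], key=_parse)
    return {
        "delegator_id": parent["delegator_id"],  # the human stays the root authority
        "tenant_id": parent["tenant_id"],
        "parent_task_id": parent["task_id"],
        "purpose": purpose,
        "allowed_tools": sorted(tools),
        "resource_constraints": {"contract_ids": sorted(contract_ids)},
        "expires_at": child_expiry,
    }


parent = {
    "delegator_id": "user_7f3a91",
    "tenant_id": "tenant_acme",
    "task_id": "task_contract_renewal_review_8842",
    "allowed_tools": ["contracts.read", "crm.read", "tickets.create"],
    "resource_constraints": {"contract_ids": ["contract_7781"]},
    "expires_at": "2026-05-12T18:30:00Z",
}
child = downscope(
    parent,
    tools=["contracts.read"],
    contract_ids=["contract_7781"],
    purpose="classify_contract_clauses",
    expires_at="2026-05-12T19:00:00Z",
)
```

Here the clause-classification agent ends up with one tool, one contract, and the parent's earlier expiry, even though it asked for a later one.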

The deeper point is that least privilege in agentic systems is not a static permission set. It is a continuous narrowing of authority as work becomes more specific. The closer an agent gets to an actual tool call, the more concrete the authorization decision should become: which tenant, which record, which data class, which action, which purpose, which delegated human, which downstream agent. Vague authority should decay into precise authority, or it should not be authority at all.

Agents will keep getting better at deciding what to do next. The access model has to be built around the assumption that sometimes they will be wrong, sometimes they will be manipulated, and sometimes the runtime will fail. Least privilege is not there to slow the agent down. It is there so the system can let agents act without pretending they are harmless.

Agentic identity is the identity of an AI agent action, not just the name of the agent runtime. It combines the delegating human, the workflow context, and the declared intent of the task. That gives the authorization system enough information to decide whether a specific tool call should be allowed — and to detect when the action no longer fits the task it was delegated to perform.

Service accounts collapse many different actions into one broad technical identity, which hides who delegated the work, what the agent was trying to do, which tenant was involved, and whether the action matched the task. The result is usually over-permission that looks convenient until an audit or incident exposes how wide the blast radius actually was.

Short-lived end-service tokens are better than permanent credentials, but they do not eliminate the core risk. If the agent runtime is compromised while the token is valid, the attacker still has a live credential. The stricter model is zero standing privileges, where the agent never holds the real service credential at all and must go through a policy-enforcing gateway that vaults those credentials itself.

Zero standing privileges means the agent does not carry API keys, OAuth tokens, database passwords, or cloud credentials for the services it uses. It carries only an identity envelope that authorizes it to request an action through an enforcement gateway. The gateway holds the real credentials and uses them only after policy allows the specific action — so a compromised agent runtime yields nothing usable to an attacker.

A gateway vault reduces blast radius by keeping end-service credentials out of the agent runtime entirely. If the runtime is compromised, the attacker gets only the agent's identity token, which is useless without going through the enforcement gateway. Any attempted action still has to pass policy checks at the gateway, where it can still be denied.

Intent drift happens when an agent's behavior moves away from the task it was originally delegated to perform. The agent may still be taking actions that look useful, but those actions no longer match the declared purpose. Detecting drift requires comparing behavior against structured task context — not trusting the model's explanation — and ideally blocking the out-of-scope action before it executes.

Permissions in multi-agent systems should be downscoped at each delegation step. A downstream agent should receive only the tools, resources, and purpose needed for its specific subtask — not the full authority of the originating principal. Passing full authority through the chain turns delegation into privilege amplification, which is the opposite of least privilege.

RBAC handles broad role-based permissions, ABAC adds context such as tenant, purpose, data class, and risk score, and ReBAC handles relationship-based access such as account ownership or document collaboration. Agent authorization typically needs all three because agent actions are contextual and delegated. Policy languages such as Cedar, Rego, and Polar make those rules explicit enough to enforce outside the model.

Co-Founder / CEO at Permit.io